Absolute Zero Downtime with a Fully Scalable Headless WordPress Website

Pages take a long time to load, network connections time out, and your servers begin to creak under the strain. Congratulations on reaching scale for your web app!

So what's next? You need to keep everything online and deliver a fast user experience. After all, speed is a feature.

What is meant by scaling?

The internet is full of resources that teach you how to code and build websites and web applications, but it is lacking in resources that teach you how to make your website scalable and fault-tolerant so that it can handle thousands of users without experiencing performance issues.

We'll take a look at a standard architecture before discussing how to make our web app scalable and fault-tolerant.

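In a standard web architecture, a single server listens for client requests and interacts with the database. That's all there is to it. The drawback is that this one server is a single point of failure: if it goes down, the whole site goes down with it.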

Why is web scaling important?

Many scaling bottleneck solutions add ambiguity, abstraction, and indirection, making systems more difficult to reason about.

This can lead to several issues, including:

- It takes longer to add new features.

- Testing code can be difficult.

- It's more difficult to find and repair bugs.

- It's more difficult to keep local development and production environments in sync.

When we look at the websites of leading companies, we rarely realize how much work goes on behind them, or how much high performance and usability matter to the company's results.

As a result, when newcomers begin creating their first websites, they are unaware of certain issues that might arise later:

- The performance of the website suffers as the number of visitors rises, and the number of failures rises as well.

- The time it takes for a page to load increases as the product selection expands, and it becomes difficult to keep an e-commerce store's inventory up to date.

- Changing the code structure becomes risky and overly complicated.

- Introducing a new product or service takes too long and costs too much money, and the ability to conduct A/B tests decreases.

As a consequence, if these issues aren't fixed and the constraints remain, the company will miss opportunities.

But the question may arise: do such issues necessarily arise on all websites?

Most websites face such issues to some degree. How severe they become, however, is determined by how well the web application was built from the start.

How is a headless WordPress website more scalable?

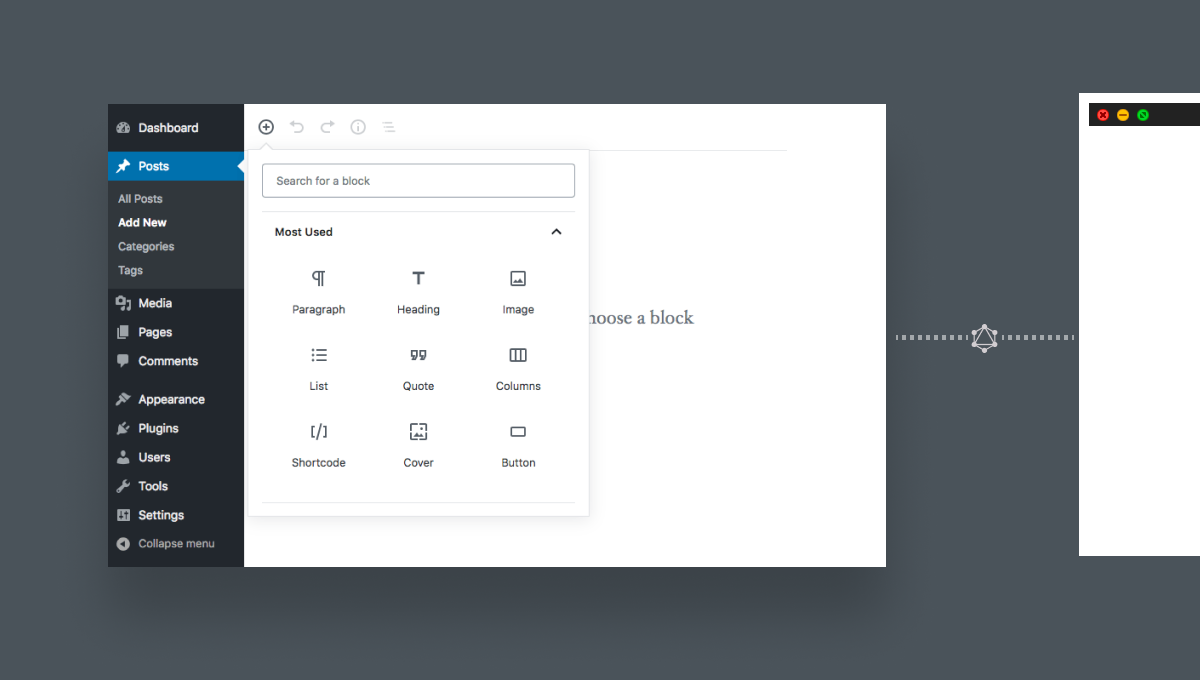

Unlike a traditional CMS, a headless CMS has no default front-end, allowing content owners to choose how they want their content to be shown to their viewers. To put it another way, imagine a typical CMS as a body, with the front-end layer (the templates and theme) serving as the "head." Remove that head and you are left with a headless CMS.

- Once the front-end delivery layer is removed, the CMS becomes a content hub where your content sits, waiting to be published.

- Front-end developers can then build as many "heads," or custom integrations, for your content as they need, across any number of platforms and devices. The result is an omnichannel experience across websites, mobile apps, smartwatches, VR/AR, and in-store billboards.

- Those pieces of content are then publishable, with the headless CMS delivering content through an API. All of this is made possible by the JAMstack architecture.
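As a minimal sketch of what "delivering content through an API" can look like, the Python snippet below reads posts from the WordPress REST API (`/wp-json/wp/v2/posts`) and reduces them to the fields a front end would render. The site URL `https://cms.example.com` and the helper names `posts_url`, `simplify`, and `fetch_posts` are assumptions made for illustration, not part of any particular setup.

```python
from __future__ import annotations

import json
from urllib.parse import urlencode
from urllib.request import urlopen

# Hypothetical back-end URL; replace it with your own WordPress site.
WP_BASE_URL = "https://cms.example.com"


def posts_url(base_url: str, per_page: int = 10) -> str:
    """Build the REST API URL for the most recent posts."""
    query = urlencode({"per_page": per_page, "_fields": "id,slug,title,content"})
    return f"{base_url.rstrip('/')}/wp-json/wp/v2/posts?{query}"


def simplify(raw_posts: list[dict]) -> list[dict]:
    """Keep only the fields a front end typically needs to render a post."""
    return [
        {
            "id": post["id"],
            "slug": post["slug"],
            "title": post["title"]["rendered"],
            "html": post["content"]["rendered"],
        }
        for post in raw_posts
    ]


def fetch_posts(base_url: str = WP_BASE_URL, per_page: int = 10) -> list[dict]:
    """Download posts over HTTP and return them in simplified form."""
    with urlopen(posts_url(base_url, per_page), timeout=10) as response:
        return simplify(json.load(response))
```

A static site generator or any other "head" could call `fetch_posts()` at build time and render the returned HTML however it likes. The WordPress back end never has to serve a page to visitors directly.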

Hosting via CDN

A headless CMS puts creating, maintaining, and reusing content and campaign pages at your fingertips. A headless CMS such as headless WordPress is built for speed and scalability, which makes it simple for marketers to increase engagement and communicate with their audience independently.

A managed headless WordPress setup needs far less maintenance from developers. When the WordPress back end runs in the cloud as a managed web application, the provider typically takes care of updates, so you avoid much of the update cycle required by conventional self-hosted CMSs.

Because serverless hosting and a CDN scale on demand, your developers rarely have to worry about performance or hosting issues. If your site receives a spike in traffic, for example, a host such as Netlify or Vercel will handle it automatically. Note that the details may vary if you set up your own CDN or serverless hosting on AWS, Azure, or another provider.

Distributing traffic

Load balancers are used to spread traffic evenly across the servers. The load balancer serves as a middleman between clients and servers: it knows the servers' IP addresses and can therefore forward traffic from clients to them.

A load balancer may use a variety of methods to route traffic between servers. One of the simplest is round robin, which sends requests to the servers in a cyclical order. If we have three servers, for example, the first request will be sent to server 1, the second to server 2, the third to server 3, and the fourth request will be sent back to server 1.
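The cyclical routing described above, commonly called round robin, can be sketched in a few lines of Python. The class name `RoundRobinBalancer` and the server names are hypothetical, and a real load balancer would forward network traffic rather than return a string.

```python
from itertools import cycle


class RoundRobinBalancer:
    """Hands out servers in a fixed, repeating order."""

    def __init__(self, servers):
        if not servers:
            raise ValueError("at least one server is required")
        # cycle() repeats the sequence endlessly: 1, 2, 3, 1, 2, 3, ...
        self._servers = cycle(servers)

    def next_server(self):
        """Return the server that should receive the next request."""
        return next(self._servers)


balancer = RoundRobinBalancer(["server-1", "server-2", "server-3"])
order = [balancer.next_server() for _ in range(4)]
print(order)  # ['server-1', 'server-2', 'server-3', 'server-1']
```

The fourth request wraps around to the first server, matching the three-server example in the text.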

However, a more effective approach is for the load balancer to first check whether a server is able to handle the request before sending it, for example by tracking each server's health and current load.
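One way to picture this check is a selection step that skips servers that are unhealthy or already full and then prefers the least loaded of the rest. The sketch below assumes each server has a known `capacity` and a tracked count of `active` requests. Both fields, and the names `Server` and `pick_server`, are illustrative assumptions rather than a description of any specific load balancer.

```python
from dataclasses import dataclass


@dataclass
class Server:
    name: str
    capacity: int         # assumed maximum number of concurrent requests
    active: int = 0       # requests currently being handled
    healthy: bool = True  # result of the most recent health check

    def can_accept(self) -> bool:
        """A server qualifies only if it is healthy and has spare capacity."""
        return self.healthy and self.active < self.capacity


def pick_server(servers):
    """Return the least-loaded server that can take a request, or None."""
    candidates = [s for s in servers if s.can_accept()]
    if not candidates:
        return None
    # Compare relative load so that larger servers receive more traffic.
    return min(candidates, key=lambda s: s.active / s.capacity)


pool = [
    Server("server-1", capacity=2, active=2),                 # full
    Server("server-2", capacity=4, active=1),                 # available
    Server("server-3", capacity=4, active=0, healthy=False),  # failed check
]
chosen = pick_server(pool)
print(chosen.name)  # server-2
```

If no server qualifies, `pick_server` returns `None`. The caller then decides whether to queue the request or return an error.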

With only one load balancer doing the job, we run into a familiar problem: the load balancer itself becomes a single point of failure, and if it fails, we have no backup. To solve this problem, we can set up two or three load balancers, one of which actively routes traffic while the others stand by as backups.
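The active/backup arrangement can be sketched as a priority list in which the first healthy load balancer is the active one. The names `FailoverGroup`, `lb-primary`, and `lb-backup-*` are hypothetical. Real deployments detect failures with heartbeats and usually move a shared virtual IP address to the backup, both of which this sketch leaves out.

```python
class FailoverGroup:
    """Tracks which load balancer in a priority-ordered group is active."""

    def __init__(self, balancers):
        self._balancers = list(balancers)  # ordered from primary to last backup
        self._healthy = {name: True for name in self._balancers}

    def _set_health(self, name, healthy):
        if name not in self._healthy:
            raise KeyError(name)
        self._healthy[name] = healthy

    def mark_down(self, name):
        """Record that a load balancer failed its health check."""
        self._set_health(name, False)

    def mark_up(self, name):
        """Record that a load balancer has recovered."""
        self._set_health(name, True)

    def active(self):
        """Return the highest-priority healthy load balancer, or None."""
        for name in self._balancers:
            if self._healthy[name]:
                return name
        return None


group = FailoverGroup(["lb-primary", "lb-backup-1", "lb-backup-2"])
print(group.active())  # lb-primary
group.mark_down("lb-primary")
print(group.active())  # lb-backup-1
```

Once the primary recovers and `mark_up("lb-primary")` is called, it becomes the active load balancer again, because it comes first in the priority order.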

Load balancers may take the form of physical hardware or software running on one of the servers. Today, with cloud services at our fingertips, setting up a load balancer is relatively inexpensive and easy.

Conclusion

Scalability is a critical requirement today, since many companies rely on the internet to reach a global audience. We've presented an approach to web server scaling alongside other techniques.

The approach differs from conventional setups in that it raises a server's request-handling capacity by keeping caches minimal and improving load balancing. Since cache operations are expensive, cache maintenance should be done while the server's load is below its capacity. We also divided the architecture into two layers in order to save money.
